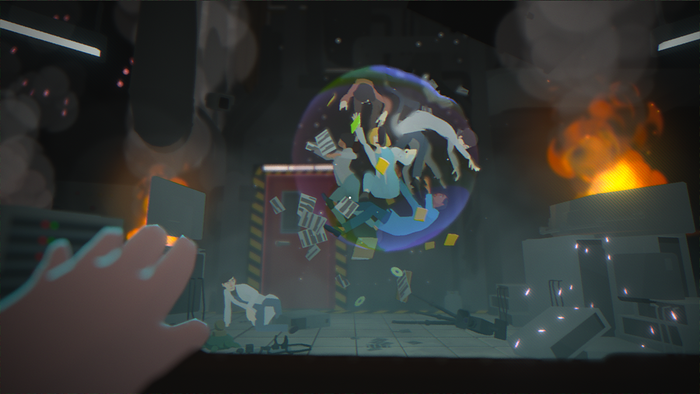

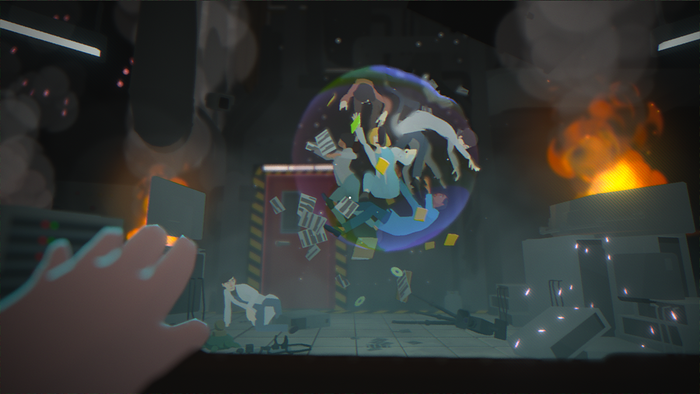

A screenshot from Goodnight Universe showing a bundle of scientists trapped in a floating anomaly

Business

Before Your Eyes writer-directors open new studio Nice Dream

The LA-based studio is working on a spiritual successor to Before Your Eyes called Goodnight Universe.
